Authors: Krupa Srivatsan and Bart Lenaerts

How adversaries evade detections and the case for predictive intelligence

Introduction

Generative AI (GenAI), particularly large language models (LLMs), is transforming cybersecurity. Adversaries are drawn to GenAI because it lowers the barrier to creating deceptive content. They use it to make malicious techniques such as social engineering and detection evasion more effective.

This blog provides examples of malicious GenAI usage, including deepfakes, chatbot automation and code obfuscation. More importantly, it makes the case for new sources of telemetry and for predictive threat intelligence that can disrupt actors before they execute their attacks.

Example 1: Deepfakes for Crypto Scams

At the end of 2024, the FBI warned[1] that criminals were using generative AI to commit fraud on a larger scale and to make their schemes more believable. GenAI reduces the time and effort needed to deceive targets by producing convincing, trustworthy-looking content. These tools can also correct the human errors that might otherwise signal fraud. While creating synthetic content isn't illegal in itself, it can facilitate crimes such as fraud and extortion. Criminals use AI-generated text, images, audio and video to enhance social engineering, phishing and financial fraud schemes.

In September 2024, Infoblox Threat Intel discovered deepfake videos, some with over 180,000 viewers, as cybercriminals seized on the U.S. presidential debate as fodder for a new cryptocurrency scam. The videos lured victims by appearing to show Elon Musk promoting cryptocurrency and telling viewers they could win big by investing in it during the streamed event.

Picture 1: Example of a deepfake cryptocurrency scam video discovered by Infoblox[2]

The videos contained QR codes linking to domains designed to impersonate the candidates or cryptocurrency platforms, adding another layer of deception. The campaign shows how criminals combine high-profile current events, social media and GenAI to make money. Although YouTube took down account after account, some videos stayed online for more than 12 hours, deceiving an unknown number of users. Blocking access to suspicious domains, however, protects users from these clever scams.
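To illustrate what blocking suspicious domains can look like at its simplest, the sketch below checks the URL behind a QR code (assumed to be already decoded to text) and flags hosts that use scam-themed terms but are not on an allowlist. The watch terms, the allowlist, and the helper names `registrable_part` and `is_suspicious_qr_target` are hypothetical choices for this example, not Infoblox's detection logic; a real protective DNS service relies on far richer signals than keyword matching.

```python
from urllib.parse import urlparse

# Hypothetical watch terms echoing the themes of the deepfake scam videos.
WATCH_TERMS = ("musk", "debate", "crypto", "btc", "giveaway")

# Hypothetical allowlist of registrable domains the organization trusts.
ALLOWLIST = {"youtube.com", "x.com", "coinbase.com"}


def registrable_part(host: str) -> str:
    """Naive eTLD+1: keep the last two labels (ignores suffixes like co.uk)."""
    labels = host.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


def is_suspicious_qr_target(url: str) -> bool:
    """Flag a QR-code URL whose host uses a watch term and is not allowlisted."""
    host = (urlparse(url).hostname or "").lower()
    if not host:
        return True  # not a usable web URL, so treat it as suspicious
    if registrable_part(host) in ALLOWLIST:
        return False
    return any(term in host for term in WATCH_TERMS)


for link in ("https://musk-debate-giveaway.live/claim",
             "https://www.youtube.com/watch?v=abc",
             "https://example.org/"):
    print(link, is_suspicious_qr_target(link))
```

Keyword matching alone is easy for actors to sidestep, which is why the rest of this post argues for signals rooted in domain registration and DNS behavior.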

Example 2: AI-Powered Chatbots

Actors often choose their victims carefully by first researching their interests. They use this research to craft a smishing message that draws the victim into a conversation. Personal notes such as “How can we bring you a happy mood today?” or “I read your last social post and wanted to become friends” are among the examples our intel team discovered (step 1 in picture 2). Some of these messages are accompanied by AI-modified pictures, but what matters is that the actor invites the victim to the next step: a conversation on Telegram or another actor-controlled channel, far away from security controls (step 2 in picture 2).

Picture 2: Sample AI-driven conversation

Once the victim is on the new channel, the actor uses several tactics to keep the conversation going, such as invitations to local golf tournaments, requests to follow an Instagram account, or AI-generated images. These AI bot-driven conversations can go on for weeks and include additional steps, like asking for a thumbs-up on YouTube or a social media repost. At this stage, the actor is assessing the victim and testing how they respond. Sooner or later, the actor shows some goodwill by creating a fake account for the victim. Each time the victim reacts positively to a request, the balance in that fake account grows. Later, the actor may even request small investments, promising a return of more than 25 percent.

When the victim asks to collect their gains (step 3 in picture 2), the real play begins: the actor requests access to the victim's crypto account. At this point, the pig-butchering scheme comes to an end, and the actor steals all the cryptocurrency in the account.

Observed characteristics of GenAI usage in chatbots

The combination of these fingerprints allows threat intel researchers to spot emerging campaigns, trace the malicious infrastructure back to its source and even predict where the actor will strike next.

Example 3: Code Obfuscation and Evasion

Threat actors are not using GenAI only to create human-readable content. Several news outlets have explored how GenAI helps actors obfuscate malicious code. Earlier this year, Infosecurity Magazine published details of how threat researchers discovered social engineering campaigns spreading VIP Keylogger and 0bj3ctivityStealer malware, both of which embedded malicious code in image files. To make their campaigns more efficient, actors are using GenAI to repurpose and stitch together existing malware that evades detection. This approach also helps them set up campaigns faster and reduces the skills needed to build infection chains. Industry threat research teams estimate an 11% increase in evasion for email threats, while other security vendors estimate that GenAI flipped their own malware classifiers' verdicts 88% of the time. Clearly, actors are making progress in these lucrative efforts.

Making the case for modernizing threat research

Because AI-driven attacks pose many detection evasion challenges, defenders need to look beyond traditional tools such as sandboxing, URL scanning or indicators derived from incident forensics. One opportunity is to track pre-attack activity instead of waiting for the final malicious payload.

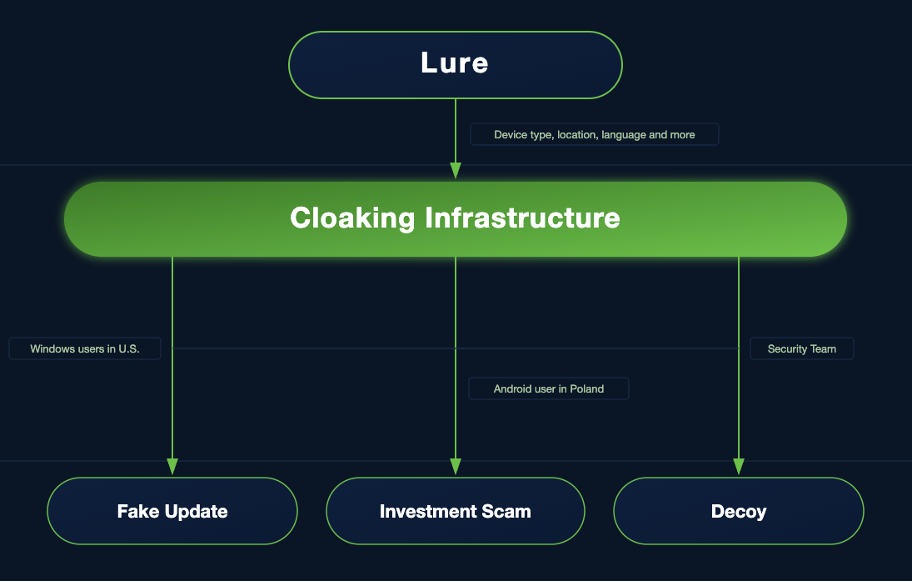

Much like a standard software development lifecycle, threat actors go through multiple stages before launching attacks. First, they develop new malicious code or use GenAI to generate variants of it. Next, they set up infrastructure such as email delivery networks, attractive lure content or untraceable traffic distribution systems. This often goes hand in hand with registering new domains or, worse, hijacking existing ones. Finally, the attack goes into “production”: the domains are weaponized and ready to deliver malicious payloads.

This final stage is where traditional security tools try to detect and stop threats, because it involves easily accessible endpoints or networks directly in the customer's environment. But because of GenAI, detection at this stage is often ineffective: the actor continuously alters payloads to bypass signature-based or known behavior-based detections.

Predictive Intelligence based on DNS Telemetry

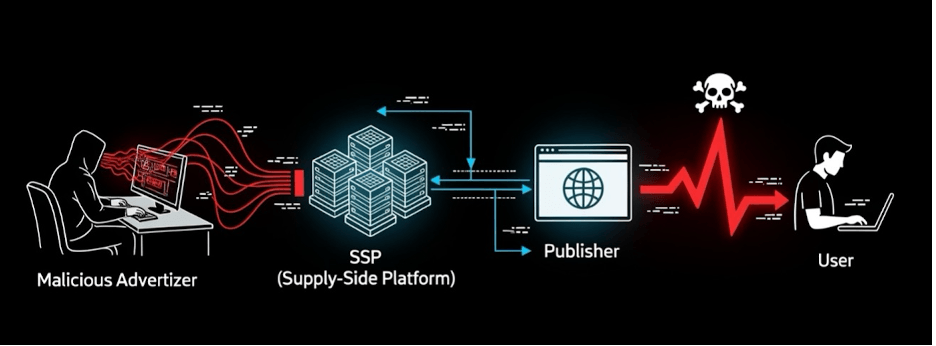

At Infoblox, finding actors and their malicious infrastructure before they attack is at the core of our team's mission. Starting from a single domain registration, combined with worldwide DNS telemetry and decades of threat expertise, Infoblox Threat Intel uses cutting-edge data science to identify even the stealthiest actors. Some of these, like VexTrio Viper[3], do not execute attacks themselves but enable thousands of affiliates to deliver otherwise seemingly unrelated malicious payloads or content.

Infoblox threat analysts intercept actor activity at the early stages of an attack, while new malicious infrastructure is being configured. By using data such as new domain registrations, DNS record details and query resolutions, Infoblox relies on data that is resistant to GenAI alteration. Why? Because DNS data is visible to multiple stakeholders (domain owner, registrar, DNS server, client, destination) and must be 100% correct for the connection to work. Simply put, DNS is an essential component of the internet that is hard to fool.

DNS has another advantage: domains and DNS infrastructure need to be configured well in advance. At Infoblox, we continuously track the creation and transfer of domains. This highly reliable data precedes any adversarial use of GenAI to alter content at the delivery stage. For that reason, DNS can provide truly predictive intelligence with high reliability.
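To make the timing argument concrete, here is a minimal sketch of one weak pre-attack signal: a domain that starts receiving DNS queries shortly after it was registered or transferred. It assumes registration timestamps (for example from RDAP or WHOIS) and first-query timestamps from DNS telemetry are already joined per domain. The `DomainObservation` record, the `flag_young_domains` function and the 30-day threshold are hypothetical and are not Infoblox's actual method, which combines many more signals.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class DomainObservation:
    """Hypothetical record joining registration data with DNS telemetry."""
    name: str
    registered: datetime     # creation or transfer time (e.g. from RDAP/WHOIS)
    first_queried: datetime  # first time the domain appeared in DNS queries


def flag_young_domains(observations, max_age=timedelta(days=30)):
    """Return names whose first DNS activity came within max_age of registration.

    A short gap between registration and first use is only one weak hint
    that infrastructure is being staged; it is not a verdict on its own.
    """
    flagged = []
    for obs in observations:
        age_at_first_use = obs.first_queried - obs.registered
        if timedelta(0) <= age_at_first_use <= max_age:
            flagged.append(obs.name)
    return flagged


utc = timezone.utc
sample = [
    DomainObservation("fresh-promo.example",
                      datetime(2024, 9, 1, tzinfo=utc),
                      datetime(2024, 9, 10, tzinfo=utc)),
    DomainObservation("old-brand.example",
                      datetime(2015, 1, 1, tzinfo=utc),
                      datetime(2024, 9, 10, tzinfo=utc)),
]
print(flag_young_domains(sample))  # ['fresh-promo.example']
```

Because the registration date is fixed before any payload is delivered, a check like this can run before the actor's GenAI-altered content ever reaches a user.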

Conclusion

AI is evolving rapidly, and its impact on security is significant. With the right approaches and strategies, such as DNS-focused threat intelligence, companies can get ahead of these risks and ensure that they don't become patient zero.

Footnotes

1. Criminals Use Generative Artificial Intelligence to Facilitate Financial Fraud

2. No, Elon Musk was not in the U.S. presidential debate

3. Infoblox Threat Intel Actor Profile VexTrio Viper